Author: Phillip C. Parrish, Retired U.S. Navy Lieutenant Commander (Intelligence), Fraud Whistleblower

Date: March 12, 2026

Classification: Unclassified – For Public Dissemination to Raise Awareness

Executive Summary

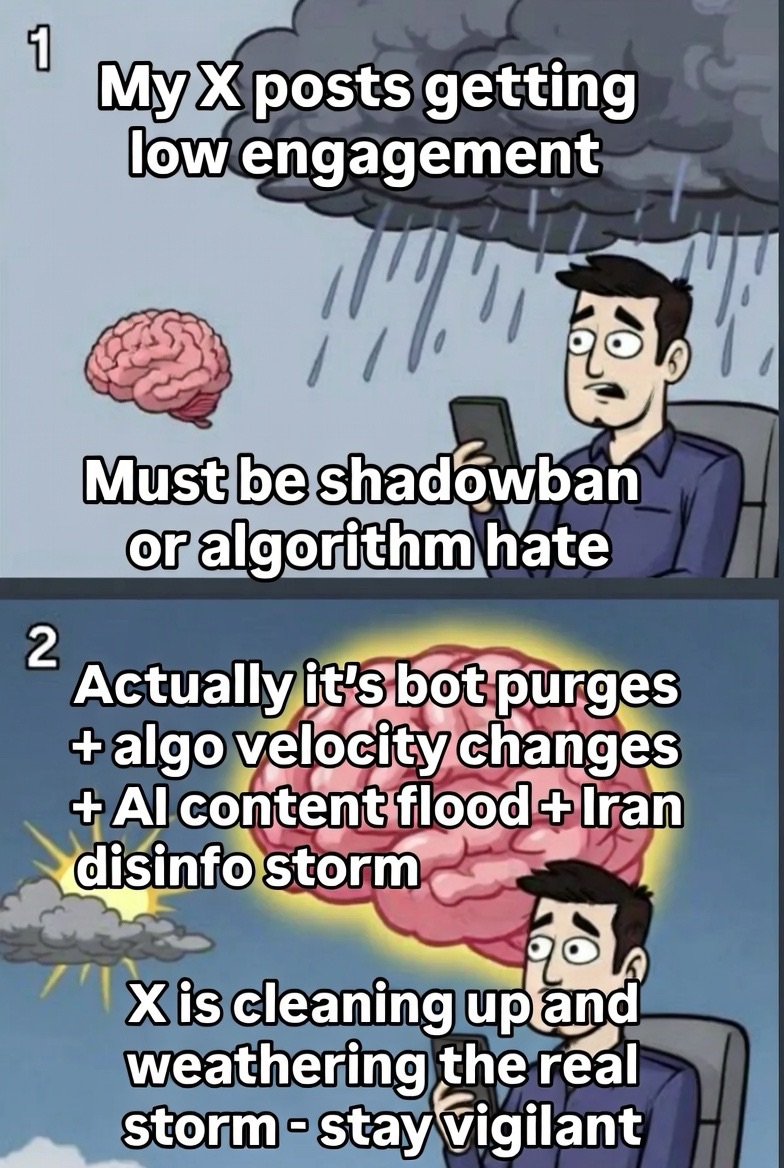

Drawing on my Navy Intelligence background, where information operations often meant sifting floods of data to separate truth from noise, I see the current challenges on X as a convergence of several factors rather than the product of a single cause. These include algorithmic refinements prioritizing early engagement velocity, aggressive bot purges resetting inflated metrics, an explosion of AI-generated content crowding feeds, geopolitical disinformation amid the Iran conflict, and adaptive user behaviors creating feedback loops. Together, these elements produce both perceived and real declines in engagement, making authentic content appear diminished when measured against falsified or inflated norms.

Platform data and user reports substantiate this: X’s January 2026 algorithm updates emphasize rapid interaction within the first few hours, penalizing slower-building posts. Bot removals, including 1.7 million accounts in early 2026, have scrubbed fake traffic, leading to genuine metric resets. AI tools, amid exposures of harmful applications, flood the platform with synthetic content, diluting organic reach. Geopolitical actors exploit this for disinformation, while users adapt by chasing viral formats, reinforcing the cycle.

This “storm” undermines public awareness of critical issues, from corruption to global events. X’s efforts (purging bots, detecting AI, and refining algorithms) show commitment, but the scale of the problem requires sustained vigilance. I encourage Elon Musk and the X team to weather this convergence, enhancing tools for authenticity to protect our constitutional republic’s informed citizenry. The root cause is not malice but systemic strain; together, we can restore clarity.

Section 1: Algorithmic Shifts and Engagement Realities

X’s platform has undergone pragmatic refinements to prioritize quality and velocity, but these changes have inadvertently amplified perceptions of decline. In January 2026, updates focused on “engagement velocity”—posts must gain likes, replies, and shares rapidly (within 30 minutes to a few hours) to achieve broader distribution, with steep time decay reducing visibility for slower performers. This rewards content sparking immediate, meaningful interaction while disadvantaging niche or repetitive posts, such as whistleblower exposés that build momentum gradually.
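To make the velocity-and-decay idea concrete, here is a minimal sketch of how such a ranking signal could behave. It is a toy model of my own, not X's actual formula: the function name, the 30-minute window, and the 180-minute half-life are all assumptions chosen only for illustration.

```python
def toy_visibility_score(interaction_times_min, age_min,
                         window_min=30.0, half_life_min=180.0):
    """Hypothetical score: early-window interaction rate times an age decay.

    interaction_times_min: minutes after posting at which each like,
    reply, or share arrived. All parameters are illustrative assumptions.
    """
    # Only interactions inside the early window (and not in the future) count.
    cutoff = min(window_min, age_min)
    early = sum(1 for t in interaction_times_min if 0 <= t <= cutoff)
    velocity = early / window_min             # interactions per minute
    decay = 0.5 ** (age_min / half_life_min)  # score halves every half-life
    return velocity * decay


# Two hypothetical posts that each collect 60 interactions in total.
fast_post = [0.5 * i for i in range(60)]   # all within the first 30 minutes
slow_post = [10.0 * i for i in range(60)]  # spread over roughly 10 hours

fast_score = toy_visibility_score(fast_post, age_min=180.0)
slow_score = toy_visibility_score(slow_post, age_min=180.0)
```

Under these assumed parameters the fast post scores far higher than the slow one even though both end up with the same total, which is the dynamic that disadvantages content building momentum gradually.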

Previously, baselines inflated by bot activity made “normal” engagement appear higher than it was; now, with cleaner data, authentic metrics reset lower, creating the illusion of throttling. Users report impressions dropping as the algorithm favors conversation over broadcast-style posting. Understanding this helps the public see that the drop is often a recalibration toward healthier dynamics, not suppression.

Section 2: Bot Purges and Metric Resets

Aggressive cleanups are a key driver of observed changes, as X removes automated accounts to combat spam and inauthenticity. In late 2025 and early 2026, millions of bots were purged, including 1.7 million reply bots and others exhibiting non-human behavior. Behavioral detection tools now flag and suspend accounts for patterns like excessive automation.

When fake engagements vanish, reported metrics decline even though genuine engagement is unchanged: views, likes, and impressions fall as inflated numbers are corrected. Smaller accounts, previously buoyed by residual bot traffic, feel this most acutely, with posts seeming “dead on arrival.” This is a positive step toward platform integrity, but it underscores the need for public education on how purges reveal truer, albeit lower, baselines.
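The arithmetic behind a metric reset is simple enough to sketch. The figures below are hypothetical, chosen only to show the mechanism; they are not measurements from X or from any real account.

```python
def apparent_drop(organic, bot):
    """Fraction by which a reported count falls once bot activity is removed.

    organic: interactions from real people (unchanged by a purge).
    bot: interactions from automated accounts (removed by a purge).
    """
    before = organic + bot  # what the dashboard showed before the purge
    after = organic         # what it shows once fake traffic is gone
    return (before - after) / before


# Hypothetical post: 400 genuine impressions plus 600 from bots.
drop = apparent_drop(organic=400, bot=600)
```

In this made-up case the reported number falls by 60 percent while the real audience stays exactly the same size, which is why a purge can feel like throttling.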

Section 3: AI-Generated Content Flood and Platform Incentives

The explosion of AI tools contributes significantly, creating a deluge of synthetic content that crowds out organic voices. Following exposures of rogue AI applications—such as tools implicated in encouraging harmful behaviors—external syndicates have intensified operations, flooding X with generated posts for testing, promotion, and manipulation. Projections indicate AI could dominate 50-90% of online content by mid-2026, training algorithms on increasingly synthetic data and favoring formulaic, high-engagement bait.

X promotes tools like Grok for ideation while combating unlabeled AI content through suspensions and rewritten detection systems. This tension dilutes feeds, making discovery harder for factual, nuanced content. The public must recognize this as an external influx straining the system, not as an inherent flaw of the platform.

Section 4: Geopolitical Disinformation and Global Dimensions

Amid the Iran conflict (strikes beginning February 28, 2026), geopolitical actors amplify the storm through AI-generated disinformation. Fake videos, images, and narratives proliferate on X, amassing millions of views and diverting algorithmic focus toward sensational material. Iranian elites use privileged access for propaganda, while state-sponsored networks from Russia and China exploit vulnerabilities for influence campaigns.

This “fog-of-war” effect sidelines steady discourse on domestic issues, complicating public access to reliable information. U.S. intelligence warnings highlight cyber threats, emphasizing the need for robust platform defenses. Faith reminds us of the pursuit of truth: “You will know the truth, and the truth will set you free” (John 8:32).

Section 5: User Behavior Adaptation and Feedback Loops

Users’ responses to these changes create self-reinforcing cycles. Widespread reports of declines prompt adaptations: posting less frequently, prioritizing replies over originals, or shifting to engagement-bait formats to chase visibility. This feeds back into the algorithm, which increasingly favors conversational, viral content over broadcast-style truth-telling.

Premium features offer some mitigation through priority distribution, but they widen gaps between optimized creators and casual users. Public understanding of these loops is essential to encourage healthier habits and support platform evolution.

Section 6: Implications for Public Awareness and Our Constitutional Republic

This convergence erodes trust in digital discourse, allowing disinformation and corruption to persist unchecked. It affects awareness of vital topics, from national security to local integrity. Envision a restored public square: citizens engaging in truthful exchanges, communities building on shared values of faith and hard work, and a republic where information empowers rather than divides.

Recommendations and Encouragement

1. Sustain Algorithmic Transparency: Continue refining for authenticity while educating users on velocity dynamics.

2. Intensify Bot and AI Defenses: Build on purges with advanced detection and mandatory labeling to counter the influx.

3. Combat Geopolitical Threats: Enhance moderation for conflict-related content, partnering with experts for rapid verification.

4. Promote User Education: Launch campaigns on adapting behaviors and spotting falsified norms.

5. Foster Human-Centric Innovation: Balance AI tools with protections ensuring genuine voices prevail.

Elon Musk and the X team: Your commitment to free expression is commendable—stay vigilant through this storm, as your efforts are crucial to safeguarding public discourse in our republic.

For further information or collaboration: Phillip C. Parrish at phillip@parrish4mn.com, or 1 (612) 460-1717.

###